How to Objectively Evaluate Search Results for a Brand

The Problem No One Clearly Defines

Two SEO analysts look at the same search results — and reach completely different conclusions.

One says:

“This looks fine.”

The other says:

“This is a serious reputation issue.”

So who’s right?

The uncomfortable truth is that there is no widely adopted, standardized way to evaluate search results for a brand.

And that’s a much bigger problem than most people realize.

What We Actually Mean by “Problematic” Search Results

When people talk about “bad” search results, they usually mean things like:

- Negative news articles

- Complaint forums or reviews

- Outdated or misleading content

- Low-quality or spammy pages ranking highly

But here’s the issue:

👉 None of these are objectively defined.

What counts as “bad”?

- Is one negative article acceptable?

- What if it ranks #1?

- What if it’s from a high-authority site?

There’s no consistent framework — only interpretation.

How It’s Currently Done (And Why It Doesn’t Scale)

In most SEO or online reputation management (ORM) workflows, evaluation looks like this:

- Search the brand name

- Manually review the top results

- Make a judgment call

That judgment is based on:

- Personal experience

- Intuition

- Context familiarity

This approach has three major flaws:

1. It’s Subjective

Different analysts will weigh signals differently.

- One might prioritize sentiment

- Another might prioritize authority

- Another might ignore certain sources entirely

👉 Same data → different conclusions

2. It’s Not Repeatable

If you run the same analysis a week later:

- Will you reach the same conclusion?

- Will another team member agree?

👉 There’s no guarantee.

3. It Doesn’t Scale

For one brand, manual evaluation is manageable.

For 50 brands?

For 500 keywords?

👉 It quickly becomes:

A bottleneck of human judgment

The Hidden Cost: Communication Breaks Down

This subjectivity doesn’t just affect analysis — it affects business.

Clients ask:

“Is our search presence improving?”

And the answer often sounds like:

- “It’s a bit better than before”

- “We’ve pushed down some negative results”

But there’s no:

- measurable baseline

- consistent metric

- clear comparison

👉 That makes trust harder to build.

What’s Missing: A Structured Evaluation Layer

The core issue is simple:

We are trying to evaluate complex search environments without a structured model.

What’s needed is:

- A way to aggregate signals across search results

- A method to weigh different factors consistently

- A framework to produce comparable outputs

In other words:

👉 Move from raw observation to structured interpretation
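The sketch below shows what a structured evaluation layer could look like in Python. It is hypothetical and is not Slander.ai's model or any industry standard. Several parts are assumptions chosen only for illustration:

- the `Result` fields and their sentiment and authority scales
- the rule that source authority amplifies sentiment
- the position discount of 1/log2(position + 1), borrowed from DCG-style ranking metrics

The point is the shape of the idea. Every result is scored by the same explicit rule, and the whole page collapses into one comparable number.

```python
import math
from dataclasses import dataclass


@dataclass
class Result:
    """One organic result on the page (hypothetical fields, assumed scales)."""
    position: int     # 1-based rank on the page
    sentiment: float  # assumed scale: -1.0 (negative) to +1.0 (positive)
    authority: float  # assumed scale: 0.0 (low) to 1.0 (high source authority)


def position_weight(position: int) -> float:
    """DCG-style discount: rank 1 gets weight 1.0, lower ranks get less."""
    return 1.0 / math.log2(position + 1)


def serp_score(results: list[Result]) -> float:
    """Position-weighted average of per-result impact, in [-1.0, 1.0].

    Assumed rule: authority amplifies sentiment in either direction, so a
    negative article on a high-authority site counts more than the same
    article on a low-authority site.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for r in results:
        impact = r.sentiment * (0.5 + 0.5 * r.authority)
        weight = position_weight(r.position)
        weighted_sum += weight * impact
        total_weight += weight
    return weighted_sum / total_weight if total_weight else 0.0


# Same three hypothetical results, with the negative article at #1 versus #3.
negative_on_top = [
    Result(position=1, sentiment=-0.8, authority=0.9),
    Result(position=2, sentiment=0.6, authority=0.7),
    Result(position=3, sentiment=0.2, authority=0.3),
]
negative_lower = [
    Result(position=1, sentiment=0.2, authority=0.3),
    Result(position=2, sentiment=0.6, authority=0.7),
    Result(position=3, sentiment=-0.8, authority=0.9),
]
print(round(serp_score(negative_on_top), 3))
print(round(serp_score(negative_lower), 3))
```

Run it and the score rises when the same negative, high-authority article moves from #1 to #3. That is one explicit answer to "What if it ranks #1?" Real weights would need to be calibrated and justified. The value of writing them down is that two analysts applying them to the same page get the same result.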

A Different Way to Think About Search Results

Instead of asking:

“Does this look bad?”

We should be asking:

- What signals are present in the search results?

- How frequently do they appear?

- What patterns emerge across the page?

When viewed this way, search results become:

👉 A system of signals — not just a list of links
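Framed this way, the three questions map onto simple counts. The sketch below makes two assumptions:

- Each result has already been tagged with signal labels. The label names are hypothetical, and producing reliable tags is the hard part.
- A "pattern" is reduced to one comparison: how often a signal appears near the top of the page versus across the whole page.

```python
from collections import Counter
from typing import Optional

# Hypothetical labels for each result, in rank order (index 0 is position 1).
# The label vocabulary is illustrative, not a standard taxonomy.
serp_signals = [
    {"news", "negative"},
    {"official_site"},
    {"review_site", "negative"},
    {"forum", "complaint"},
    {"social_profile"},
    {"news", "neutral"},
]


def signal_counts(serp: list[set[str]], top_n: Optional[int] = None) -> Counter:
    """How many results in the first top_n positions carry each signal."""
    window = serp if top_n is None else serp[:top_n]
    counts: Counter = Counter()
    for labels in window:
        counts.update(labels)
    return counts


def signal_share(serp: list[set[str]], signal: str, top_n: int) -> float:
    """Fraction of the first top_n results that carry the given signal."""
    window = serp[:top_n]
    if not window:
        return 0.0
    return sum(signal in labels for labels in window) / len(window)


print(signal_counts(serp_signals)["negative"])              # 2
print(round(signal_share(serp_signals, "negative", 3), 3))  # 0.667
print(round(signal_share(serp_signals, "negative", 6), 3))  # 0.333
```

In this made-up example, negative signals appear on two of the top three results but on only a third of the page overall. In other words, they are concentrated where searchers look first, and the comparison turns that pattern into a number.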

Why This Matters More Than Ever

As digital presence becomes more complex:

- More content is generated

- More sources appear

- More narratives compete

Manual interpretation becomes:

👉 Less reliable

👉 Less scalable

👉 Less defensible

Toward a More Objective Approach

A structured, signal-based approach could make it possible to:

- Evaluate search results consistently

- Compare different brands objectively

- Track changes over time with clarity

Not by replacing human judgment, but by making it more grounded and repeatable.
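Tracking change over time mostly comes down to applying one fixed rule to every capture. The sketch below rests on two assumptions:

- A hypothetical `Snapshot` record holds one sentiment label per result.
- "Share of negative results in the top N" stands in for a real metric.

A real signal-based model would combine more factors. The repeatability comes from applying the same rule every time.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Snapshot:
    """One capture of a brand's results (hypothetical structure)."""
    brand: str
    captured_on: date
    labels: list[str]  # one sentiment label per result, in rank order


def negative_share(snapshot: Snapshot, top_n: int = 10) -> float:
    """Share of the first top_n results labeled "negative"."""
    window = snapshot.labels[:top_n]
    if not window:
        return 0.0
    return sum(label == "negative" for label in window) / len(window)


def trend(snapshots: list[Snapshot], top_n: int = 10) -> list[tuple[date, float]]:
    """Apply the same rule to every snapshot, ordered oldest first."""
    ordered = sorted(snapshots, key=lambda s: s.captured_on)
    return [(s.captured_on, negative_share(s, top_n)) for s in ordered]


# Hypothetical captures for one brand, deliberately listed out of order.
history = [
    Snapshot("BrandA", date(2024, 3, 1),
             ["negative", "neutral", "positive", "negative", "neutral"]),
    Snapshot("BrandA", date(2024, 1, 1),
             ["negative", "negative", "neutral", "negative", "positive"]),
]
for captured_on, share in trend(history, top_n=5):
    print(captured_on, f"{share:.0%}")
# 2024-01-01 60%
# 2024-03-01 40%
```

The metric is computed identically for every snapshot, so the same functions can be run on another brand's captures to compare both brands on one scale. The answer to "Is our search presence improving?" then becomes a baseline plus a measured change rather than an impression.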

Final Thought

Right now, evaluating search results is treated as an art.

But as the stakes grow, it needs to become:

A system.

About This Perspective

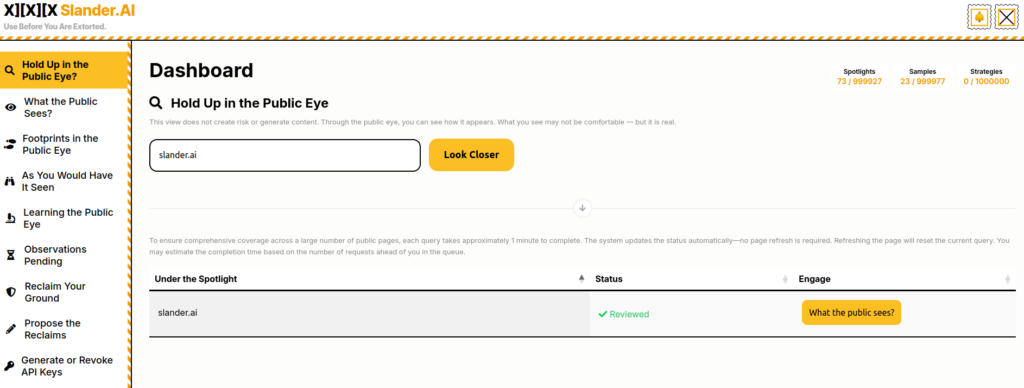

This is exactly the type of problem we’ve been exploring at Slander.ai: how to turn fragmented search signals into structured, interpretable insight.

FAQ

Q: How do you evaluate search results for a brand?

Evaluating search results involves analyzing patterns across the SERP, including sentiment, source credibility, and recurring narratives — not just rankings.

Q: What makes search results problematic?

Search results may be problematic when negative, misleading, or low-quality content dominates high-ranking positions, especially from authoritative sources.

Q: Is there a standard way to measure search reputation?

Currently, most evaluations rely on manual judgment. There is no widely adopted standardized framework.

Q: Can SERP quality be measured objectively?

Traditional methods struggle with objectivity. A structured, signal-based approach can help improve consistency.